We continue with the installation of the Grid Infrastructure.

First, I created the ORACLE_HOME directories for the oracle and grid users.

Next, we need to set up SSH user equivalence for both users:

[oracle@rac11gnode1 oracle]$ mkdir .ssh

[oracle@rac11gnode1 oracle]$ /usr/bin/ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_rsa):

Created directory '/home/oracle/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_rsa.

Your public key has been saved in /home/oracle/.ssh/id_rsa.pub.

The key fingerprint is:

c5:89:b4:1a:b7:05:ec:de:a4:8c:49:27:1c:9c:b9:af [email protected]

The key's randomart image is:

+--[ RSA 2048]----+

| . +o |

| =..= . |

| ..++ = |

| =+o+. |

| ..OS+ |

| o = . |

| . |

| E |

| |

+-----------------+

[oracle@rac11gnode1 oracle]$

[oracle@rac11gnode1 oracle]$ /usr/bin/ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_dsa.

Your public key has been saved in /home/oracle/.ssh/id_dsa.pub.

The key fingerprint is:

bf:7d:b7:32:dc:53:a4:16:08:5f:77:10:f5:d2:52:2c [email protected]

The key's randomart image is:

+--[ DSA 1024]----+

| o=o|

| . Eo=|

| o ooo+|

| o .o.|

| S + |

| . o .|

| . ... .|

| o +.o.|

| . ..ooo|

+-----------------+

The same thing should be done for the grid user:

[grid@rac11gnode1 ~]$ ssh-keygen -t rsa

[grid@rac11gnode1 ~]$ ssh-keygen -t dsa

Now merge both generated public keys into one file (for the grid user, name the file rac11gnode1grid.pub):

cat id_rsa.pub id_dsa.pub > rac11gnode1oracle.pub

Now copy the file to the other host and copy its content to the authorized_keys file:

[oracle@rac11gnode1 .ssh]$ scp rac11gnode1oracle.pub rac11gnode2:/home/oracle/.ssh/

The authenticity of host 'rac11gnode2 (192.168.0.113)' can't be established.

RSA key fingerprint is 01:30:3c:de:27:eb:e9:e3:28:4a:43:07:57:03:bb:0a.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac11gnode2,192.168.0.113' (RSA) to the list of known hosts.

oracle@rac11gnode2's password:

rac11gnode1oracle.pub 100% 1032 1.0KB/s 00:00

[oracle@rac11gnode1 .ssh]$ su - grid

Password:

[grid@rac11gnode1 ~]$ cd .ssh

[grid@rac11gnode1 .ssh]$ scp rac11gnode1grid.pub rac11gnode2:/home/grid/.ssh/

The authenticity of host 'rac11gnode2 (192.168.0.113)' can't be established.

RSA key fingerprint is 01:30:3c:de:27:eb:e9:e3:28:4a:43:07:57:03:bb:0a.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac11gnode2,192.168.0.113' (RSA) to the list of known hosts.

grid@rac11gnode2's password:

rac11gnode1grid.pub 100% 1028 1.0KB/s 00:00

[oracle@rac11gnode2 .ssh]$ cat rac11gnode1oracle.pub > authorized_keys

[grid@rac11gnode2 .ssh]$ cat rac11gnode1grid.pub > authorized_keys
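
Redirecting with `>` replaces whatever is already in authorized_keys, which is fine on a fresh host but drops existing keys otherwise. The following is a minimal sketch of an appending variant, assuming bash; the function name `append_keys` is hypothetical and not part of the original steps.

```shell
#!/usr/bin/env bash
# Append public keys from a merged .pub file to authorized_keys
# without overwriting keys that are already there.
# Usage: append_keys <merged_pub_file> <authorized_keys_file>
append_keys() {
    local src=$1 dest=$2 key
    touch "$dest"
    # Add only lines that are not already present (exact whole-line match).
    while IFS= read -r key || [ -n "$key" ]; do
        [ -z "$key" ] && continue
        grep -qxF -- "$key" "$dest" || printf '%s\n' "$key" >> "$dest"
    done < "$src"
    # With StrictModes enabled, sshd can reject a key file that is
    # writable by group or others, so restrict it to the owner.
    chmod 600 "$dest"
}

# Example, run on rac11gnode2 as the oracle user:
# append_keys ~/.ssh/rac11gnode1oracle.pub ~/.ssh/authorized_keys
```

Running it twice with the same input leaves the file unchanged, so the step can be repeated safely.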

Now you can test the SSH connection; it should work without a password. Test the connection to the local host as well. Once the host names are stored in the known_hosts file, subsequent SSH connections should complete without writing any messages to standard output.
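
The connection test can be scripted along these lines. This is only a sketch, assuming bash and the OpenSSH client; `check_ssh_equivalence` and the `SSH_BIN` override are hypothetical names introduced here so the loop can be tried without a network.

```shell
#!/usr/bin/env bash
# Check passwordless SSH from the current user to each listed host.
# SSH_BIN is a hypothetical override; it defaults to the real ssh client.
check_ssh_equivalence() {
    local host failed=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of prompting for a password.
        if "${SSH_BIN:-ssh}" -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null; then
            echo "OK   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    return "$failed"
}

# Example, run once as oracle and once as grid:
# check_ssh_equivalence rac11gnode1 rac11gnode2
```

A host that still asks for a password or for host key confirmation shows up as FAIL, because ssh cannot prompt in batch mode.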

Now copy the installation files to the first node, unzip them, and run the Cluster Verification Utility:

./runcluvfy.sh stage -pre crsinst -n rac11gnode1,rac11gnode2 -verbose

Verify that all checks pass.

I had to add the grid user to the dba group and add the -x parameter to the ntpd daemon options.

The only problem I couldn't solve was PRVF-5637 : DNS response time could not be checked on following nodes: rac11gnode2,rac11gnode1. On Metalink (My Oracle Support) I found that this is a bug on Red Hat 6.4 and that the DNS response time should be checked manually.
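
With -verbose the output is long, so it can help to save it and pull out the message codes afterwards. A small sketch, assuming bash and grep with -o support; the function name `cluvfy_codes` and the log file name are assumptions made for illustration.

```shell
#!/usr/bin/env bash
# List the distinct PRVx-nnnn message codes found in saved cluvfy output.
# Reads standard input, so it works with a file or a pipe.
cluvfy_codes() {
    grep -oE 'PRV[A-Z]-[0-9]+' | sort -u
}

# Example (the log file name is an assumption):
# ./runcluvfy.sh stage -pre crsinst -n rac11gnode1,rac11gnode2 -verbose > cluvfy_pre.log 2>&1
# cluvfy_codes < cluvfy_pre.log
```

Each code that remains in the list can then be looked up individually, as was done here for PRVF-5637.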

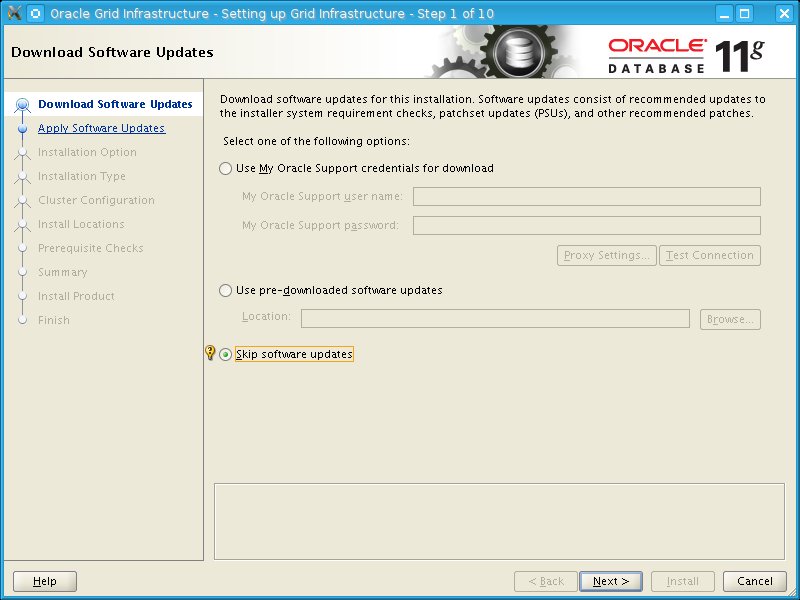

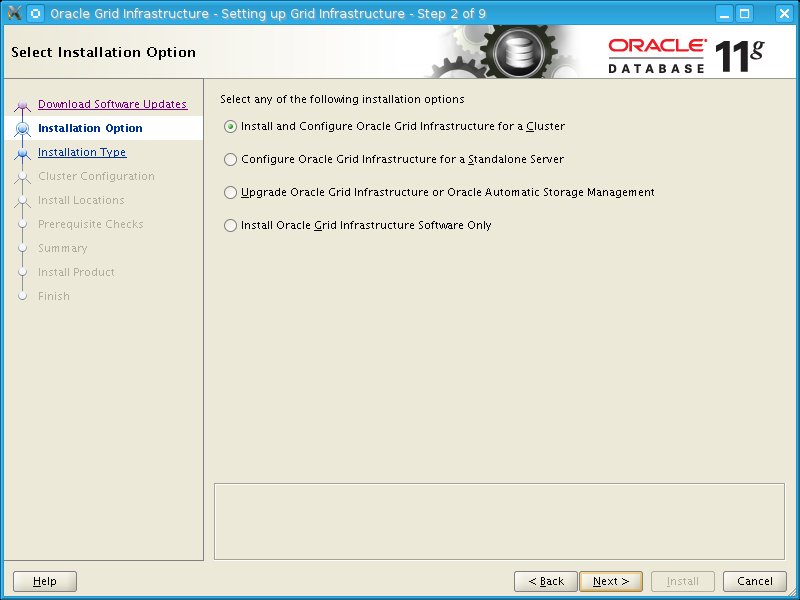

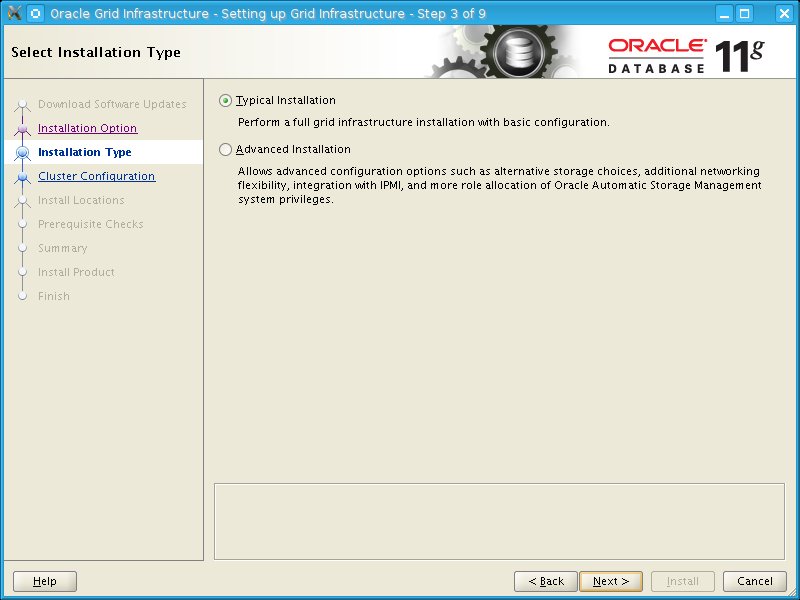

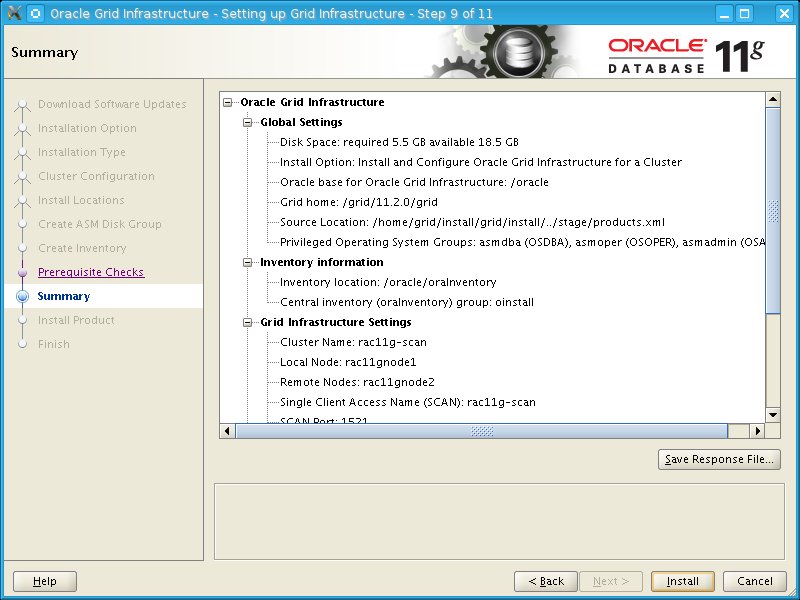

Now that all checks have passed, I can start the installation.

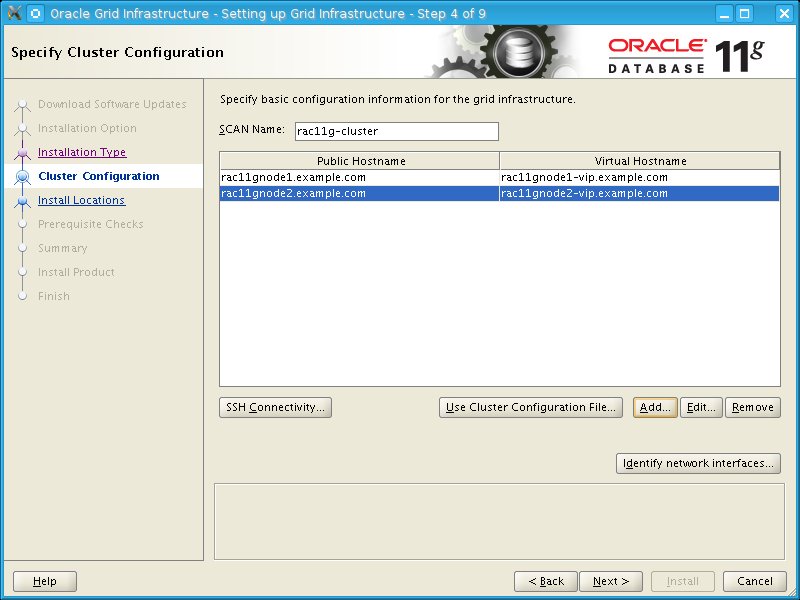

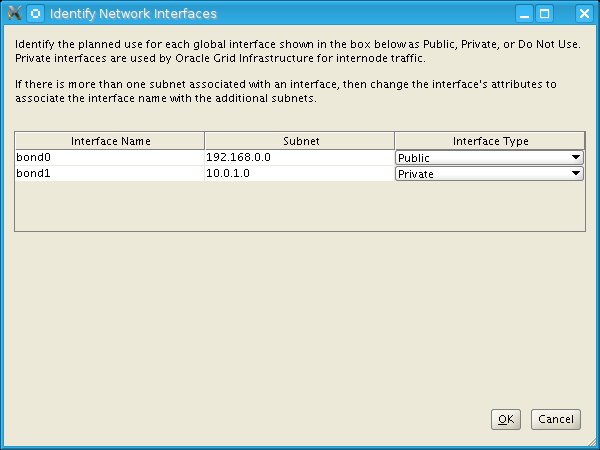

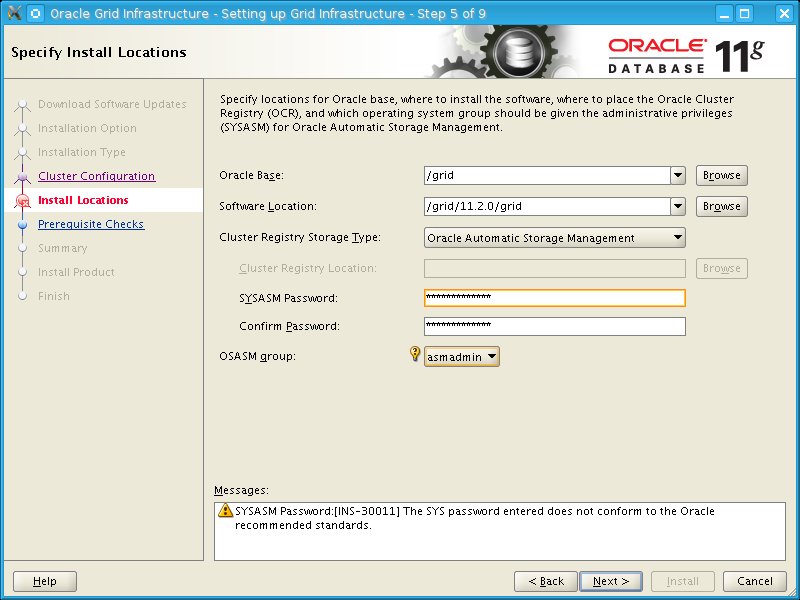

Add the second cluster node and enter the SCAN name you have added to the DNS:

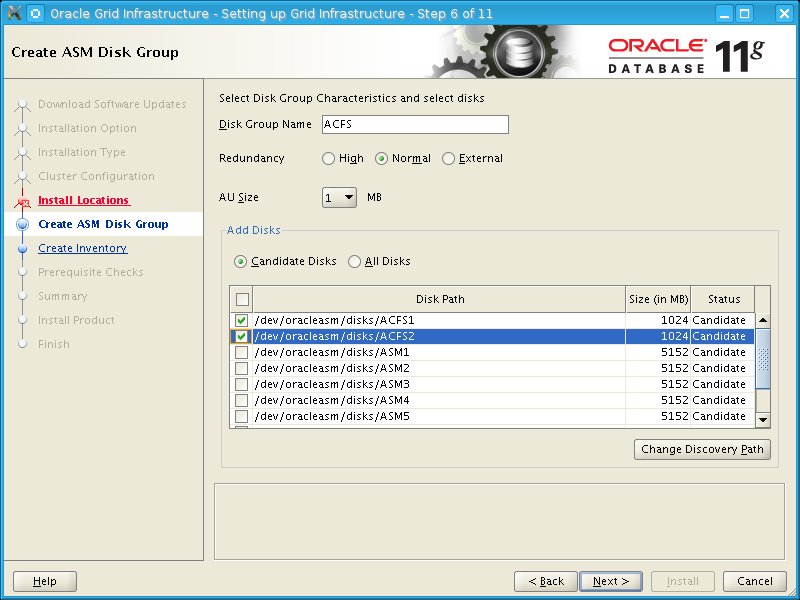

Choose the disks for the ACFS disk group, where the OCR and voting disk will be stored:

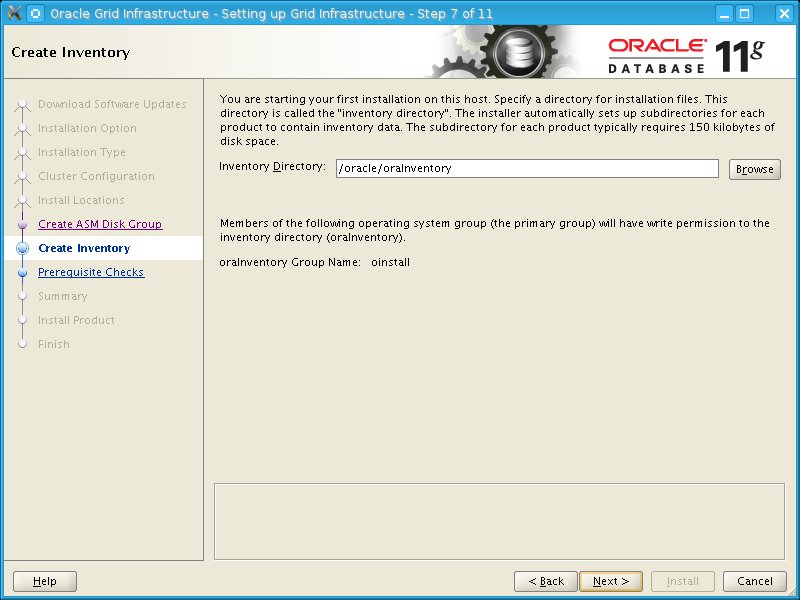

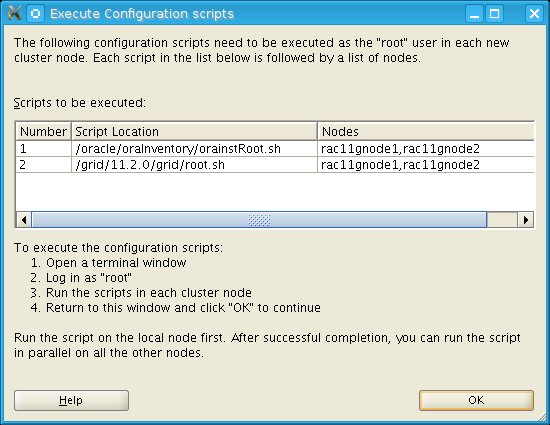

Now run orainstRoot.sh on both nodes, and then root.sh on both nodes.

Installation is finished.

You can check the resources and crs status:

[grid@rac11gnode1 ~]$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.ACFS.dg ora....up.type ONLINE ONLINE rac11gnode1

ora....ER.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora....N1.lsnr ora....er.type ONLINE ONLINE rac11gnode2

ora....N2.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora....N3.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora.asm ora.asm.type ONLINE ONLINE rac11gnode1

ora.cvu ora.cvu.type ONLINE ONLINE rac11gnode1

ora.gsd ora.gsd.type OFFLINE OFFLINE

ora....network ora....rk.type ONLINE ONLINE rac11gnode1

ora.oc4j ora.oc4j.type ONLINE ONLINE rac11gnode1

ora.ons ora.ons.type ONLINE ONLINE rac11gnode1

ora....SM1.asm application ONLINE ONLINE rac11gnode1

ora....E1.lsnr application ONLINE ONLINE rac11gnode1

ora....de1.gsd application OFFLINE OFFLINE

ora....de1.ons application ONLINE ONLINE rac11gnode1

ora....de1.vip ora....t1.type ONLINE ONLINE rac11gnode1

ora....SM2.asm application ONLINE ONLINE rac11gnode2

ora....E2.lsnr application ONLINE ONLINE rac11gnode2

ora....de2.gsd application OFFLINE OFFLINE

ora....de2.ons application ONLINE ONLINE rac11gnode2

ora....de2.vip ora....t1.type ONLINE ONLINE rac11gnode2

ora.scan1.vip ora....ip.type ONLINE ONLINE rac11gnode2

ora.scan2.vip ora....ip.type ONLINE ONLINE rac11gnode1

ora.scan3.vip ora....ip.type ONLINE ONLINE rac11gnode1

[grid@rac11gnode1 bin]$ crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online
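
On a long resource list it is easy to miss a single resource that is not in its target state. The following sketch, assuming bash and awk, filters the `crs_stat -t` listing for rows where Target and State differ; the function name `crs_mismatches` is hypothetical.

```shell
#!/usr/bin/env bash
# Print resources whose State differs from their Target.
# Expects "crs_stat -t" style rows on standard input:
#   Name Type Target State [Host]
crs_mismatches() {
    awk '$1 ~ /^ora\./ && $3 != $4 { print $1, "target=" $3, "state=" $4 }'
}

# Example:
# crs_stat -t | crs_mismatches
```

The gsd resources in the listing above have both Target and State set to OFFLINE, so they are not reported as a mismatch.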

Now we will create a disk group for the data and a disk group for the FRA.

Check existing diskgroups:

[grid@rac11gnode1 bin]$ sqlplus "/as sysasm"

SQL*Plus: Release 11.2.0.3.0 Production on Mon Apr 8 20:30:19 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.2.0.3.0 - 64bit Production

With the Real Application Clusters and Automatic Storage Management options

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

Check available disks:

SQL> select path,os_mb,state from v$asm_disk;

PATH OS_MB STATE

-------------------------------------------------- ---------- --------

/dev/oracleasm/disks/ASM8 7424 NORMAL

/dev/oracleasm/disks/ASM7 10240 NORMAL

/dev/oracleasm/disks/ASM6 5152 NORMAL

/dev/oracleasm/disks/ASM5 5152 NORMAL

/dev/oracleasm/disks/ASM4 5152 NORMAL

/dev/oracleasm/disks/ASM1 5152 NORMAL

/dev/oracleasm/disks/ASM3 5152 NORMAL

/dev/oracleasm/disks/ASM2 5152 NORMAL

/dev/oracleasm/disks/ACFS2 1024 NORMAL

/dev/oracleasm/disks/ACFS1 1024 NORMAL

Choose disks and create the diskgroup for datafiles:

SQL> create diskgroup data external redundancy DISK '/dev/oracleasm/disks/ASM1','/dev/oracleasm/disks/ASM2','/dev/oracleasm/disks/ASM3';

Diskgroup created.

Now check the available diskgroups:

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

DATA 15456 15402

Use the same procedure to create the FRA:

SQL> create diskgroup fra external redundancy disk '/dev/oracleasm/disks/ASM7';

Diskgroup created.

Check diskgroups again:

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

DATA 15456 15402

FRA 10240 10190
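
With external redundancy ASM does not mirror the data, so TOTAL_MB should equal the sum of the OS_MB values of the member disks. A quick arithmetic check in bash, using the sizes from the v$asm_disk output above; the helper name `sum_mb` is hypothetical.

```shell
#!/usr/bin/env bash
# Sum disk sizes given in MB.
sum_mb() {
    local total=0 mb
    for mb in "$@"; do
        total=$((total + mb))
    done
    echo "$total"
}

# DATA: ASM1 + ASM2 + ASM3, 5152 MB each
sum_mb 5152 5152 5152
# FRA: ASM7 only
sum_mb 10240
```

The two results, 15456 and 10240, match the TOTAL_MB values reported for DATA and FRA.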

Now we can apply the latest PSU patch to the Grid Infrastructure home (11.2.0.3.5).

Copy patches p6880880_112000_Linux-x86-64.zip and p14727347_112030_Linux-x86-64.zip to the servers.

Now copy p6880880 to $ORACLE_HOME and unzip it. This should replace the OPatch directory with the new version of OPatch.

Unzip the p14727347 patch.
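
The staging steps can be sketched as a small script. It assumes bash and unzip, prints the commands by default instead of running them, and the names `run`, `stage_patches` and `DRY_RUN` are hypothetical. The download directory /home/grid/install is an assumption, and renaming the old OPatch directory first is an extra precaution that is not part of the steps above.

```shell
#!/usr/bin/env bash
# Print the command (DRY_RUN=1, the default) or execute it (DRY_RUN=0).
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Usage: stage_patches <grid_home> <zip_dir> <psu_dir>
stage_patches() {
    local grid_home=$1 zip_dir=$2 psu_dir=$3
    # Extra precaution: keep the old OPatch directory for rollback.
    run mv "$grid_home/OPatch" "$grid_home/OPatch.orig"
    # New OPatch version goes into the Grid home.
    run unzip -q -o "$zip_dir/p6880880_112000_Linux-x86-64.zip" -d "$grid_home"
    # PSU is unpacked into its own staging directory.
    run mkdir -p "$psu_dir"
    run unzip -q -o "$zip_dir/p14727347_112030_Linux-x86-64.zip" -d "$psu_dir"
}

# Grid home and PSU directory as used in this post; the zip location is assumed.
stage_patches /grid/grid/11.2.0 /home/grid/install /home/grid/install/psu
```

Review the printed commands first, then rerun with DRY_RUN=0 as the grid user on each node.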

Create the OCM response file:

[grid@rac11gnode1 11.2.0]$ cd OPatch/ocm/bin/

[grid@rac11gnode1 bin]$ ./emocmrsp -no_banner

Provide your email address to be informed of security issues, install and

initiate Oracle Configuration Manager. Easier for you if you use your My

Oracle Support Email address/User Name.

Visit http://www.oracle.com/support/policies.html for details.

Email address/User Name:

You have not provided an email address for notification of security issues.

Do you wish to remain uninformed of security issues ([Y]es, [N]o) [N]: Y

The OCM configuration response file (ocm.rsp) was successfully created.

Then, as root, apply the PSU with opatch auto:

[root@rac11gnode1 ~]# opatch auto /home/grid/install/psu/ -oh /grid/grid/11.2.0/ -ocmrf /grid/grid/11.2.0/OPatch/ocm/bin/ocm.rsp

Executing /grid/grid/11.2.0/perl/bin/perl /grid/grid/11.2.0/OPatch/crs/patch11203.pl -patchdir /home/grid/install -patchn psu -oh /grid/grid/11.2.0/ -ocmrf /grid/grid/11.2.0/OPatch/ocm/bin/ocm.rsp -paramfile /grid/grid/11.2.0/crs/install/crsconfig_params

/grid/grid/11.2.0/crs/install/crsconfig_params

/grid/grid/11.2.0/crs/install/s_crsconfig_defs

This is the main log file: /grid/grid/11.2.0/cfgtoollogs/opatchauto2013-04-09_21-41-28.log

This file will show your detected configuration and all the steps that opatchauto attempted to do on your system: /grid/grid/11.2.0/cfgtoollogs/opatchauto2013-04-09_21-41-28.report.log

2013-04-09 21:41:28: Starting Clusterware Patch Setup

Using configuration parameter file: /grid/grid/11.2.0/crs/install/crsconfig_params

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.crsd' on 'rac11gnode1'

CRS-2790: Starting shutdown of Cluster Ready Services-managed resources on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.oc4j' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.cvu' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.ACFS1.dg' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER.lsnr' on 'rac11gnode1'

CRS-2677: Stop of 'ora.LISTENER.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.rac11gnode1.vip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.rac11gnode1.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.rac11gnode1.vip' on 'rac11gnode2'

CRS-2677: Stop of 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.scan3.vip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.scan2.vip' on 'rac11gnode1'

CRS-2676: Start of 'ora.rac11gnode1.vip' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.scan2.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.scan2.vip' on 'rac11gnode2'

CRS-2677: Stop of 'ora.scan3.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.scan3.vip' on 'rac11gnode2'

CRS-2676: Start of 'ora.scan2.vip' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.scan3.vip' on 'rac11gnode2' succeeded

CRS-2672: Attempting to start 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode2'

CRS-2672: Attempting to start 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode2'

CRS-2676: Start of 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.oc4j' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.oc4j' on 'rac11gnode2'

CRS-2677: Stop of 'ora.cvu' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.cvu' on 'rac11gnode2'

CRS-2676: Start of 'ora.cvu' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.oc4j' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.ACFS1.dg' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.asm' on 'rac11gnode1'

CRS-2677: Stop of 'ora.asm' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.ons' on 'rac11gnode1'

CRS-2677: Stop of 'ora.ons' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.net1.network' on 'rac11gnode1'

CRS-2677: Stop of 'ora.net1.network' on 'rac11gnode1' succeeded

CRS-2792: Shutdown of Cluster Ready Services-managed resources on 'rac11gnode1' has completed

CRS-2677: Stop of 'ora.crsd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.ctssd' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.evmd' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.asm' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.mdnsd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.evmd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.mdnsd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.ctssd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.asm' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.cluster_interconnect.haip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.cluster_interconnect.haip' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.cssd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.cssd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.crf' on 'rac11gnode1'

CRS-2677: Stop of 'ora.crf' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.gipcd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.gipcd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.gpnpd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.gpnpd' on 'rac11gnode1' succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'rac11gnode1' has completed

CRS-4133: Oracle High Availability Services has been stopped.

Successfully unlock /grid/grid/11.2.0

patch /home/grid/install/psu/15876003 apply successful for home /grid/grid/11.2.0

patch /home/grid/install/psu/14727310 apply successful for home /grid/grid/11.2.0

CRS-4123: Oracle High Availability Services has been started.

Check all applied patches:

[grid@rac11gnode1 OPatch]$ ./opatch lsinventory

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

Oracle Home : /grid/grid/11.2.0

Central Inventory : /grid/oraInventory

from : /grid/grid/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /grid/grid/11.2.0/cfgtoollogs/opatch/opatch2013-04-09_22-23-20PM_1.log

Lsinventory Output file location : /grid/grid/11.2.0/cfgtoollogs/opatch/lsinv/lsinventory2013-04-09_22-23-20PM.txt

--------------------------------------------------------------------------------

Installed Top-level Products (1):

Oracle Grid Infrastructure 11.2.0.3.0

There are 1 products installed in this Oracle Home.

Interim patches (2) :

Patch 14727310 : applied on Tue Apr 09 21:50:44 CEST 2013

Unique Patch ID: 15663328

Patch description: "Database Patch Set Update : 11.2.0.3.5 (14727310)"

Created on 27 Dec 2012, 00:06:30 hrs PST8PDT

Sub-patch 14275605; "Database Patch Set Update : 11.2.0.3.4 (14275605)"

Sub-patch 13923374; "Database Patch Set Update : 11.2.0.3.3 (13923374)"

Sub-patch 13696216; "Database Patch Set Update : 11.2.0.3.2 (13696216)"

Sub-patch 13343438; "Database Patch Set Update : 11.2.0.3.1 (13343438)"

Bugs fixed:

13566938, 13593999, 10350832, 14138130, 12919564, 13624984, 13588248

13080778, 13804294, 14258925, 12873183, 13645875, 12880299, 14664355

14409183, 12998795, 14469008, 13719081, 13492735, 12857027, 14263036

14263073, 13742433, 13732226, 12905058, 13742434, 12849688, 12950644

13742435, 13464002, 12879027, 13534412, 14613900, 12585543, 12535346

12588744, 11877623, 12847466, 13649031, 13981051, 12582664, 12797765

14262913, 12923168, 13612575, 13384182, 13466801, 13484963, 11063191

13772618, 13070939, 12797420, 13041324, 12976376, 11708510, 13742437

13026410, 13737746, 13742438, 13326736, 13001379, 13099577, 14275605

13742436, 9873405, 9858539, 14040433, 12662040, 9703627, 12617123

12845115, 12764337, 13354082, 13397104, 12964067, 13550185, 12780983

12583611, 14546575, 13476583, 15862016, 11840910, 13903046, 15862017

13572659, 13718279, 13657605, 13448206, 13419660, 14480676, 13632717

14063281, 13430938, 13467683, 13420224, 14548763, 12646784, 14035825

12861463, 12834027, 15862021, 13377816, 13036331, 14727310, 13685544

13499128, 15862018, 12829021, 15862019, 12794305, 14546673, 12791981

13503598, 13787482, 10133521, 12718090, 13399435, 14023636, 12401111

13257247, 13362079, 12917230, 13923374, 14480675, 13524899, 13559697

14480674, 13916709, 14076523, 13773133, 13340388, 13366202, 13528551

12894807, 13343438, 13454210, 12748240, 14205448, 13385346, 15853081

12971775, 13035804, 13544396, 13035360, 14062795, 12693626, 13332439

14038787, 14062796, 12913474, 14841409, 14390252, 13370330, 14062797

13059165, 14062794, 12959852, 13358781, 12345082, 12960925, 9659614

13699124, 14546638, 13936424, 13338048, 12938841, 12658411, 12620823

12656535, 14062793, 12678920, 13038684, 14062792, 13807411, 12594032

13250244, 15862022, 9761357, 12612118, 13742464, 14052474, 13457582

13527323, 15862020, 12780098, 13502183, 13705338, 13696216, 10263668

15862023, 13554409, 15862024, 13103913, 13645917, 14063280, 13011409

Patch 15876003 : applied on Tue Apr 09 21:48:34 CEST 2013

Unique Patch ID: 15680307

Patch description: "Grid Infrastructure Patch Set Update : 11.2.0.3.5 (14727347)"

Created on 11 Jan 2013, 06:19:07 hrs PST8PDT

Bugs fixed:

15876003, 14275572, 13919095, 13696251, 13348650, 12659561, 13039908

14277586, 13987807, 14625969, 13938166, 13825231, 13036424, 12794268

13011520, 13569812, 12758736, 13000491, 13498267, 13077654, 13001901

13550689, 13430715, 13806545, 13634583, 11675721, 14082976, 14271305

12771830, 12538907, 13947200, 12996428, 14102704, 13066371, 13483672

12594616, 13879428, 13540563, 12897651, 12897902, 13241779, 12896850

12726222, 12829429, 12728585, 13079948, 12876314, 13090686, 12925041

12995950, 13251796, 12650672, 12398492, 12848480, 13582411, 13652088

12990582, 13857364, 12975811, 12917897, 13653178, 13082238, 12947871

13037709, 13371153, 12878750, 10114953, 11772838, 13058611, 13001955

14001941, 11836951, 12965049, 13440962, 12765467, 13727853, 13425727

12885323, 14407395, 13965075, 13339443, 12784559, 14242977, 13332363

13074261, 12971251, 13811209, 12709476, 14168708, 14096821, 13993634

13460353, 13523527, 12857064, 13719731, 13396284, 12899169, 13111013

12558569, 13323698, 12867511, 12639013, 10260842, 12959140, 13085732

12829917, 10317921, 13843080, 12934171, 12849377, 12349553, 13924431

13869978, 12680491, 12914824, 13789135, 12730342, 13334158, 12950823

10418841, 12832204, 13355963, 13531373, 13776758, 12720728, 13620816

13002015, 13023609, 13024624, 12791719, 13886023, 13255295, 13821454

12782756, 14152875, 14100232, 14186070, 14569263, 13912373, 12873909

13845120, 14214257, 12914722, 13243172, 12842804, 13045518, 12765868

12772345, 12663376, 13345868, 14059576, 13683090, 12932852, 13889047

12695029, 14588629, 13146560, 13038806, 14251904, 14070200, 13820621

14304758, 13396356, 13697828, 13258062, 12834777, 14371335, 12996572

13941934, 14711358, 13657366, 13019958, 12810890, 13888719, 14637577

13502441, 13726162, 13880925, 14153867, 13506114, 12820045, 13604057

12823838, 13877508, 12823042, 14494305, 13582706, 13617861, 12825835

13263435, 13025879, 13853089, 14009845, 13410987, 13570879, 13637590

12827493, 13247273, 13068077

Rac system comprising of multiple nodes

Local node = rac11gnode1

Remote node = rac11gnode2

--------------------------------------------------------------------------------

OPatch succeeded.
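
Since the inventory listing is dominated by bug numbers, a short filter makes it easier to compare the applied patches between the two nodes. A sketch assuming bash and sed; the function name `applied_patches` is hypothetical, and the pattern is based only on the "Patch N : applied on" lines shown above.

```shell
#!/usr/bin/env bash
# Extract the patch numbers from "opatch lsinventory" output.
# Matches lines such as:
#   Patch 14727310 : applied on Tue Apr 09 21:50:44 CEST 2013
applied_patches() {
    sed -n 's/^[[:space:]]*Patch[[:space:]]\{1,\}\([0-9]\{1,\}\)[[:space:]]*:[[:space:]]*applied on.*/\1/p'
}

# Example:
# ./opatch lsinventory | applied_patches
```

For the output above this would list 14727310 and 15876003, the two patches reported by opatch auto.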

Repeat these steps on the second node as well.

Now we can start with the Oracle software installation and the creation of the database.

You can check the resources and crs status:

[grid@rac11gnode1 ~]$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.ACFS.dg ora....up.type ONLINE ONLINE rac11gnode1

ora....ER.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora....N1.lsnr ora....er.type ONLINE ONLINE rac11gnode2

ora....N2.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora....N3.lsnr ora....er.type ONLINE ONLINE rac11gnode1

ora.asm ora.asm.type ONLINE ONLINE rac11gnode1

ora.cvu ora.cvu.type ONLINE ONLINE rac11gnode1

ora.gsd ora.gsd.type OFFLINE OFFLINE

ora....network ora....rk.type ONLINE ONLINE rac11gnode1

ora.oc4j ora.oc4j.type ONLINE ONLINE rac11gnode1

ora.ons ora.ons.type ONLINE ONLINE rac11gnode1

ora....SM1.asm application ONLINE ONLINE rac11gnode1

ora....E1.lsnr application ONLINE ONLINE rac11gnode1

ora....de1.gsd application OFFLINE OFFLINE

ora....de1.ons application ONLINE ONLINE rac11gnode1

ora....de1.vip ora....t1.type ONLINE ONLINE rac11gnode1

ora....SM2.asm application ONLINE ONLINE rac11gnode2

ora....E2.lsnr application ONLINE ONLINE rac11gnode2

ora....de2.gsd application OFFLINE OFFLINE

ora....de2.ons application ONLINE ONLINE rac11gnode2

ora....de2.vip ora....t1.type ONLINE ONLINE rac11gnode2

ora.scan1.vip ora....ip.type ONLINE ONLINE rac11gnode2

ora.scan2.vip ora....ip.type ONLINE ONLINE rac11gnode1

ora.scan3.vip ora....ip.type ONLINE ONLINE rac11gnode1

[grid@rac11gnode1 bin]$ crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

Now, we will create disk group for the data and disk group for FRA.

Check existing diskgroups:

[grid@rac11gnode1 bin]$ sqlplus "/as sysasm"

SQL*Plus: Release 11.2.0.3.0 Production on Mon Apr 8 20:30:19 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.2.0.3.0 - 64bit Production

With the Real Application Clusters and Automatic Storage Management options

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

Check available disks:

SQL> select path,os_mb,state from v$asm_disk;

PATH OS_MB STATE

-------------------------------------------------- ---------- --------

/dev/oracleasm/disks/ASM8 7424 NORMAL

/dev/oracleasm/disks/ASM7 10240 NORMAL

/dev/oracleasm/disks/ASM6 5152 NORMAL

/dev/oracleasm/disks/ASM5 5152 NORMAL

/dev/oracleasm/disks/ASM4 5152 NORMAL

/dev/oracleasm/disks/ASM1 5152 NORMAL

/dev/oracleasm/disks/ASM3 5152 NORMAL

/dev/oracleasm/disks/ASM2 5152 NORMAL

/dev/oracleasm/disks/ACFS2 1024 NORMAL

/dev/oracleasm/disks/ACFS1 1024 NORMAL

Choose disks and create the diskgroup for datafiles:

SQL> create diskgroup data external redundancy DISK '/dev/oracleasm/disks/ASM1','/dev/oracleasm/disks/ASM2','/dev/oracleasm/disks/ASM3';

Diskgroup created.

Now check the available diskgroups:

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

DATA 15456 15402

Use the same procedure to create the FRA:

SQL> create diskgroup fra external redundancy disk '/dev/oracleasm/disks/ASM7';

Diskgroup created.

Check diskgroups again:

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS 2048 1650

DATA 15456 15402

FRA 10240 10190

Now, we can apply the lates PSU patch onf the GRID (11.2.0.3.5).

Copy patches p6880880_112000_Linux-x86-64.zip and p14727347_112030_Linux-x86-64.zip to the servers.

Now, copy the p6880880 to the $ORACLE_HOME and unzip. Ith should replace the OPatch direcotry with the new version of opatch.

Unzip the p14727347 patch.

Create ocm response file:

[grid@rac11gnode1 11.2.0]$ cd OPatch/ocm/bin/

[grid@rac11gnode1 bin]$ ./emocmrsp -no_banner

Provide your email address to be informed of security issues, install and

initiate Oracle Configuration Manager. Easier for you if you use your My

Oracle Support Email address/User Name.

Visit http://www.oracle.com/support/policies.html for details.

Email address/User Name:

You have not provided an email address for notification of security issues.

Do you wish to remain uninformed of security issues ([Y]es, [N]o) [N]: Y

The OCM configuration response file (ocm.rsp) was successfully created.

[root@rac11gnode1 ~]# opatch auto /home/grid/install/psu/ -oh /grid/grid/11.2.0/ -ocmrf /grid/grid/11.2.0/OPatch/ocm/bin/ocm.rsp

Executing /grid/grid/11.2.0/perl/bin/perl /grid/grid/11.2.0/OPatch/crs/patch11203.pl -patchdir /home/grid/install -patchn psu -oh /grid/grid/11.2.0/ -ocmrf /grid/grid/11.2.0/OPatch/ocm/bin/ocm.rsp -paramfile /grid/grid/11.2.0/crs/install/crsconfig_params

/grid/grid/11.2.0/crs/install/crsconfig_params

/grid/grid/11.2.0/crs/install/s_crsconfig_defs

This is the main log file: /grid/grid/11.2.0/cfgtoollogs/opatchauto2013-04-09_21-41-28.log

This file will show your detected configuration and all the steps that opatchauto attempted to do on your system: /grid/grid/11.2.0/cfgtoollogs/opatchauto2013-04-09_21-41-28.report.log

2013-04-09 21:41:28: Starting Clusterware Patch Setup

Using configuration parameter file: /grid/grid/11.2.0/crs/install/crsconfig_params

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.crsd' on 'rac11gnode1'

CRS-2790: Starting shutdown of Cluster Ready Services-managed resources on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.oc4j' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.cvu' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.ACFS1.dg' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.LISTENER.lsnr' on 'rac11gnode1'

CRS-2677: Stop of 'ora.LISTENER.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.rac11gnode1.vip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.rac11gnode1.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.rac11gnode1.vip' on 'rac11gnode2'

CRS-2677: Stop of 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.scan3.vip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.scan2.vip' on 'rac11gnode1'

CRS-2676: Start of 'ora.rac11gnode1.vip' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.scan2.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.scan2.vip' on 'rac11gnode2'

CRS-2677: Stop of 'ora.scan3.vip' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.scan3.vip' on 'rac11gnode2'

CRS-2676: Start of 'ora.scan2.vip' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.scan3.vip' on 'rac11gnode2' succeeded

CRS-2672: Attempting to start 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode2'

CRS-2672: Attempting to start 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode2'

CRS-2676: Start of 'ora.LISTENER_SCAN2.lsnr' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.LISTENER_SCAN3.lsnr' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.oc4j' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.oc4j' on 'rac11gnode2'

CRS-2677: Stop of 'ora.cvu' on 'rac11gnode1' succeeded

CRS-2672: Attempting to start 'ora.cvu' on 'rac11gnode2'

CRS-2676: Start of 'ora.cvu' on 'rac11gnode2' succeeded

CRS-2676: Start of 'ora.oc4j' on 'rac11gnode2' succeeded

CRS-2677: Stop of 'ora.ACFS1.dg' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.asm' on 'rac11gnode1'

CRS-2677: Stop of 'ora.asm' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.ons' on 'rac11gnode1'

CRS-2677: Stop of 'ora.ons' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.net1.network' on 'rac11gnode1'

CRS-2677: Stop of 'ora.net1.network' on 'rac11gnode1' succeeded

CRS-2792: Shutdown of Cluster Ready Services-managed resources on 'rac11gnode1' has completed

CRS-2677: Stop of 'ora.crsd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.ctssd' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.evmd' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.asm' on 'rac11gnode1'

CRS-2673: Attempting to stop 'ora.mdnsd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.evmd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.mdnsd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.ctssd' on 'rac11gnode1' succeeded

CRS-2677: Stop of 'ora.asm' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.cluster_interconnect.haip' on 'rac11gnode1'

CRS-2677: Stop of 'ora.cluster_interconnect.haip' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.cssd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.cssd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.crf' on 'rac11gnode1'

CRS-2677: Stop of 'ora.crf' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.gipcd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.gipcd' on 'rac11gnode1' succeeded

CRS-2673: Attempting to stop 'ora.gpnpd' on 'rac11gnode1'

CRS-2677: Stop of 'ora.gpnpd' on 'rac11gnode1' succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'rac11gnode1' has completed

CRS-4133: Oracle High Availability Services has been stopped.

Successfully unlock /grid/grid/11.2.0

patch /home/grid/install/psu/15876003 apply successful for home /grid/grid/11.2.0

patch /home/grid/install/psu/14727310 apply successful for home /grid/grid/11.2.0

CRS-4123: Oracle High Availability Services has been started.

Now check that both patches are listed in the inventory:

[grid@rac11gnode1 OPatch]$ ./opatch lsinventory

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

Oracle Home : /grid/grid/11.2.0

Central Inventory : /grid/oraInventory

from : /grid/grid/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /grid/grid/11.2.0/cfgtoollogs/opatch/opatch2013-04-09_22-23-20PM_1.log

Lsinventory Output file location : /grid/grid/11.2.0/cfgtoollogs/opatch/lsinv/lsinventory2013-04-09_22-23-20PM.txt

--------------------------------------------------------------------------------

Installed Top-level Products (1):

Oracle Grid Infrastructure 11.2.0.3.0

There are 1 products installed in this Oracle Home.

Interim patches (2) :

Patch 14727310 : applied on Tue Apr 09 21:50:44 CEST 2013

Unique Patch ID: 15663328

Patch description: "Database Patch Set Update : 11.2.0.3.5 (14727310)"

Created on 27 Dec 2012, 00:06:30 hrs PST8PDT

Sub-patch 14275605; "Database Patch Set Update : 11.2.0.3.4 (14275605)"

Sub-patch 13923374; "Database Patch Set Update : 11.2.0.3.3 (13923374)"

Sub-patch 13696216; "Database Patch Set Update : 11.2.0.3.2 (13696216)"

Sub-patch 13343438; "Database Patch Set Update : 11.2.0.3.1 (13343438)"

Bugs fixed:

13566938, 13593999, 10350832, 14138130, 12919564, 13624984, 13588248

13080778, 13804294, 14258925, 12873183, 13645875, 12880299, 14664355

14409183, 12998795, 14469008, 13719081, 13492735, 12857027, 14263036

14263073, 13742433, 13732226, 12905058, 13742434, 12849688, 12950644

13742435, 13464002, 12879027, 13534412, 14613900, 12585543, 12535346

12588744, 11877623, 12847466, 13649031, 13981051, 12582664, 12797765

14262913, 12923168, 13612575, 13384182, 13466801, 13484963, 11063191

13772618, 13070939, 12797420, 13041324, 12976376, 11708510, 13742437

13026410, 13737746, 13742438, 13326736, 13001379, 13099577, 14275605

13742436, 9873405, 9858539, 14040433, 12662040, 9703627, 12617123

12845115, 12764337, 13354082, 13397104, 12964067, 13550185, 12780983

12583611, 14546575, 13476583, 15862016, 11840910, 13903046, 15862017

13572659, 13718279, 13657605, 13448206, 13419660, 14480676, 13632717

14063281, 13430938, 13467683, 13420224, 14548763, 12646784, 14035825

12861463, 12834027, 15862021, 13377816, 13036331, 14727310, 13685544

13499128, 15862018, 12829021, 15862019, 12794305, 14546673, 12791981

13503598, 13787482, 10133521, 12718090, 13399435, 14023636, 12401111

13257247, 13362079, 12917230, 13923374, 14480675, 13524899, 13559697

14480674, 13916709, 14076523, 13773133, 13340388, 13366202, 13528551

12894807, 13343438, 13454210, 12748240, 14205448, 13385346, 15853081

12971775, 13035804, 13544396, 13035360, 14062795, 12693626, 13332439

14038787, 14062796, 12913474, 14841409, 14390252, 13370330, 14062797

13059165, 14062794, 12959852, 13358781, 12345082, 12960925, 9659614

13699124, 14546638, 13936424, 13338048, 12938841, 12658411, 12620823

12656535, 14062793, 12678920, 13038684, 14062792, 13807411, 12594032

13250244, 15862022, 9761357, 12612118, 13742464, 14052474, 13457582

13527323, 15862020, 12780098, 13502183, 13705338, 13696216, 10263668

15862023, 13554409, 15862024, 13103913, 13645917, 14063280, 13011409

Patch 15876003 : applied on Tue Apr 09 21:48:34 CEST 2013

Unique Patch ID: 15680307

Patch description: "Grid Infrastructure Patch Set Update : 11.2.0.3.5 (14727347)"

Created on 11 Jan 2013, 06:19:07 hrs PST8PDT

Bugs fixed:

15876003, 14275572, 13919095, 13696251, 13348650, 12659561, 13039908

14277586, 13987807, 14625969, 13938166, 13825231, 13036424, 12794268

13011520, 13569812, 12758736, 13000491, 13498267, 13077654, 13001901

13550689, 13430715, 13806545, 13634583, 11675721, 14082976, 14271305

12771830, 12538907, 13947200, 12996428, 14102704, 13066371, 13483672

12594616, 13879428, 13540563, 12897651, 12897902, 13241779, 12896850

12726222, 12829429, 12728585, 13079948, 12876314, 13090686, 12925041

12995950, 13251796, 12650672, 12398492, 12848480, 13582411, 13652088

12990582, 13857364, 12975811, 12917897, 13653178, 13082238, 12947871

13037709, 13371153, 12878750, 10114953, 11772838, 13058611, 13001955

14001941, 11836951, 12965049, 13440962, 12765467, 13727853, 13425727

12885323, 14407395, 13965075, 13339443, 12784559, 14242977, 13332363

13074261, 12971251, 13811209, 12709476, 14168708, 14096821, 13993634

13460353, 13523527, 12857064, 13719731, 13396284, 12899169, 13111013

12558569, 13323698, 12867511, 12639013, 10260842, 12959140, 13085732

12829917, 10317921, 13843080, 12934171, 12849377, 12349553, 13924431

13869978, 12680491, 12914824, 13789135, 12730342, 13334158, 12950823

10418841, 12832204, 13355963, 13531373, 13776758, 12720728, 13620816

13002015, 13023609, 13024624, 12791719, 13886023, 13255295, 13821454

12782756, 14152875, 14100232, 14186070, 14569263, 13912373, 12873909

13845120, 14214257, 12914722, 13243172, 12842804, 13045518, 12765868

12772345, 12663376, 13345868, 14059576, 13683090, 12932852, 13889047

12695029, 14588629, 13146560, 13038806, 14251904, 14070200, 13820621

14304758, 13396356, 13697828, 13258062, 12834777, 14371335, 12996572

13941934, 14711358, 13657366, 13019958, 12810890, 13888719, 14637577

13502441, 13726162, 13880925, 14153867, 13506114, 12820045, 13604057

12823838, 13877508, 12823042, 14494305, 13582706, 13617861, 12825835

13263435, 13025879, 13853089, 14009845, 13410987, 13570879, 13637590

12827493, 13247273, 13068077

Rac system comprising of multiple nodes

Local node = rac11gnode1

Remote node = rac11gnode2

--------------------------------------------------------------------------------

OPatch succeeded.
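The full listing is long because of the bug numbers. A minimal sketch for reducing it to just the patch numbers is below. It assumes the listing keeps the "Patch NNN : applied on ..." line format shown above; the function name list_applied_patches is only illustrative and is not part of OPatch.

```shell
# Read `opatch lsinventory` output on stdin and print one patch number per line.
# Assumption: each applied patch is reported as "Patch <number> : applied on <date>".
list_applied_patches() {
    grep -E '^[[:space:]]*Patch[[:space:]]+[0-9]+[[:space:]]*: applied on' \
        | awk '{print $2}'
}

# Possible usage from the OPatch directory (not executed here):
#   ./opatch lsinventory | list_applied_patches
```

For the inventory above this should print 14727310 and 15876003, one per line.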

Repeat the same steps on the second node.

Now we can start installing the Oracle software and creating the database.
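Once the second node is patched, it may be worth confirming that both nodes report the same set of patches. The sketch below compares two saved lsinventory listings. The helper names patch_ids and same_patches and the /tmp file names are hypothetical, and it assumes the "Patch NNN : applied on ..." line format seen in the output above.

```shell
# Print the sorted patch numbers found in a saved `opatch lsinventory` listing.
patch_ids() {
    grep -E '^[[:space:]]*Patch[[:space:]]+[0-9]+[[:space:]]*: applied on' "$1" \
        | awk '{print $2}' | sort
}

# Return 0 only if both listings contain the same, non-empty set of patches.
same_patches() {
    a=$(patch_ids "$1")
    b=$(patch_ids "$2")
    [ -n "$a" ] && [ "$a" = "$b" ]
}

# Possible usage (file names are assumptions, not executed here):
#   ./opatch lsinventory > /tmp/node1_lsinv.txt        # on rac11gnode1
#   ./opatch lsinventory > /tmp/node2_lsinv.txt        # on rac11gnode2, then copy over
#   same_patches /tmp/node1_lsinv.txt /tmp/node2_lsinv.txt && echo "patch levels match"
```

The comparison ignores the order in which the patches are listed, so only a missing or extra patch makes it fail.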