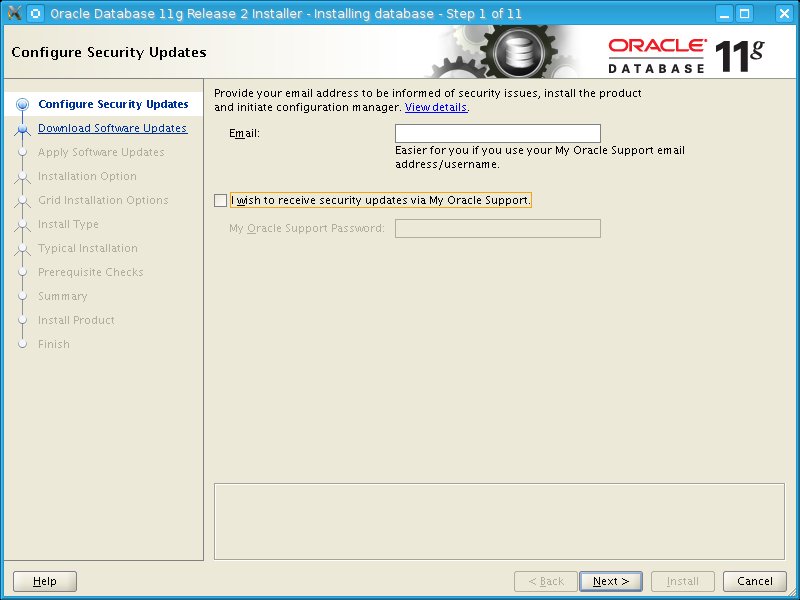

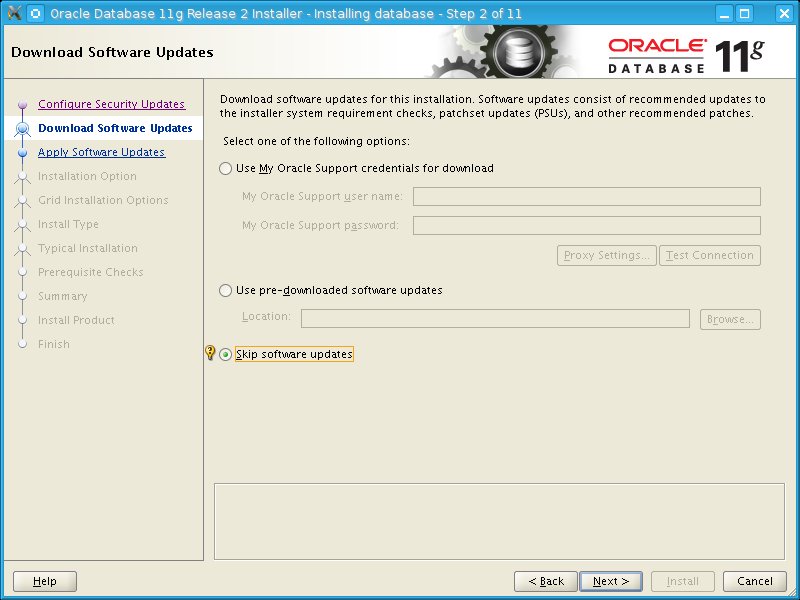

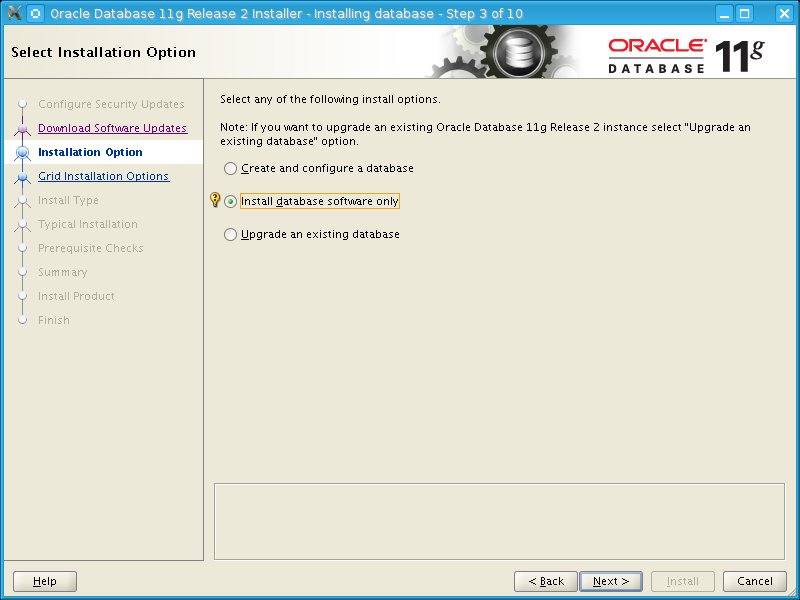

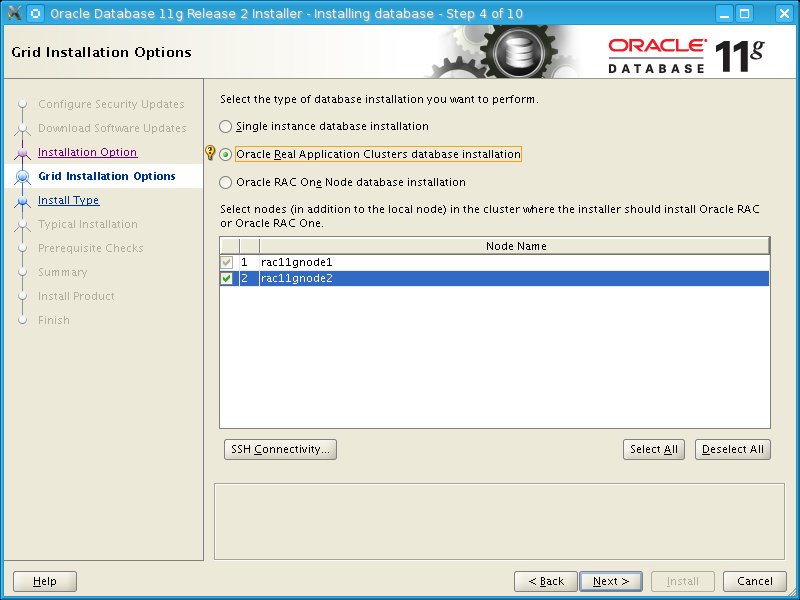

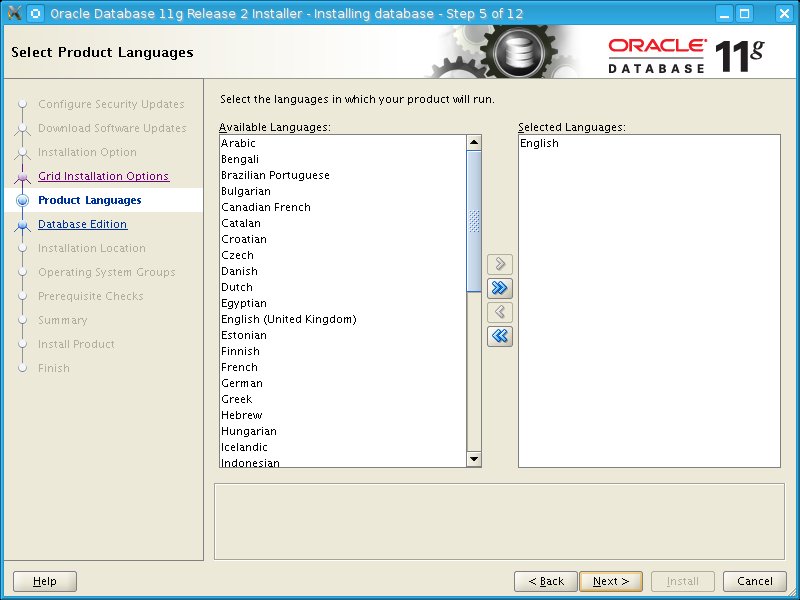

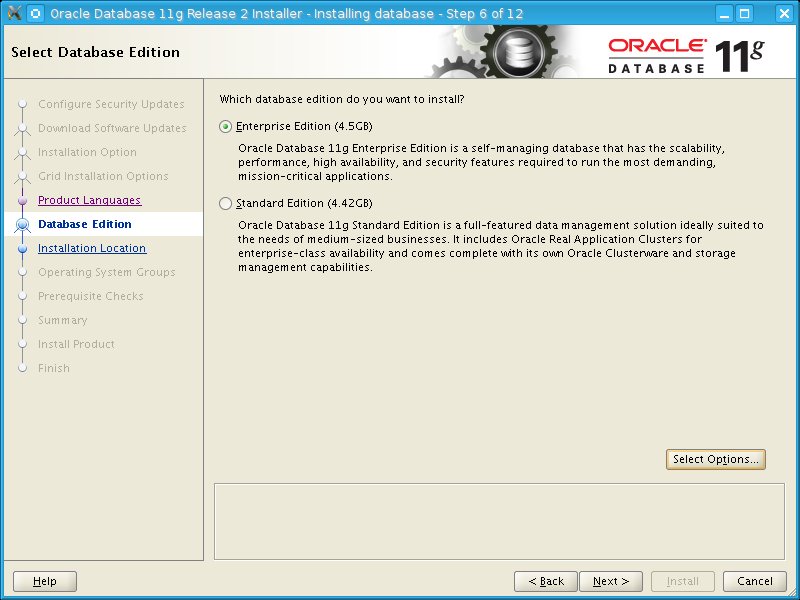

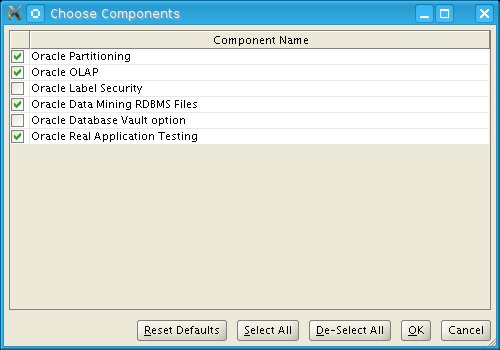

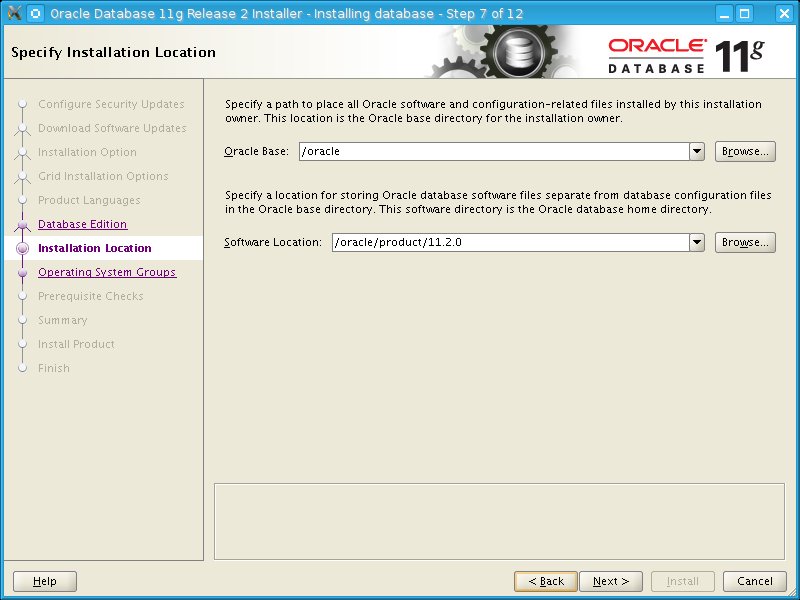

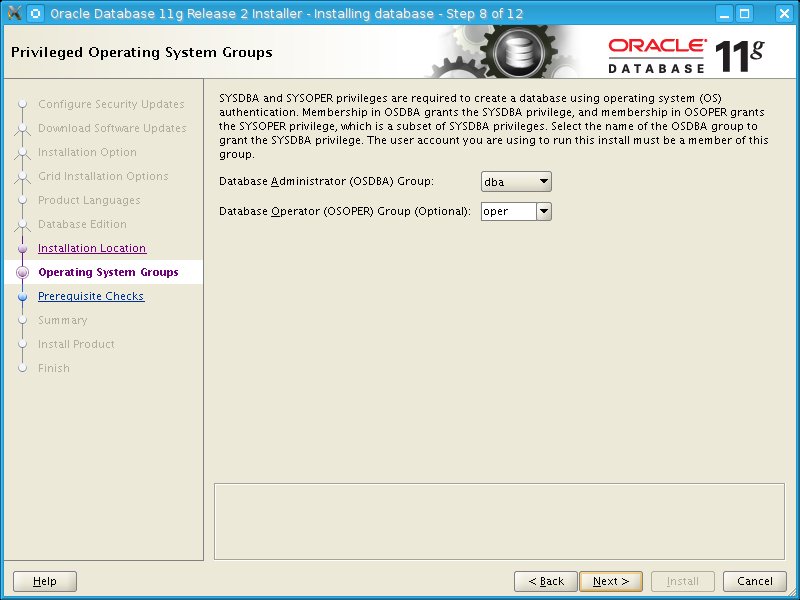

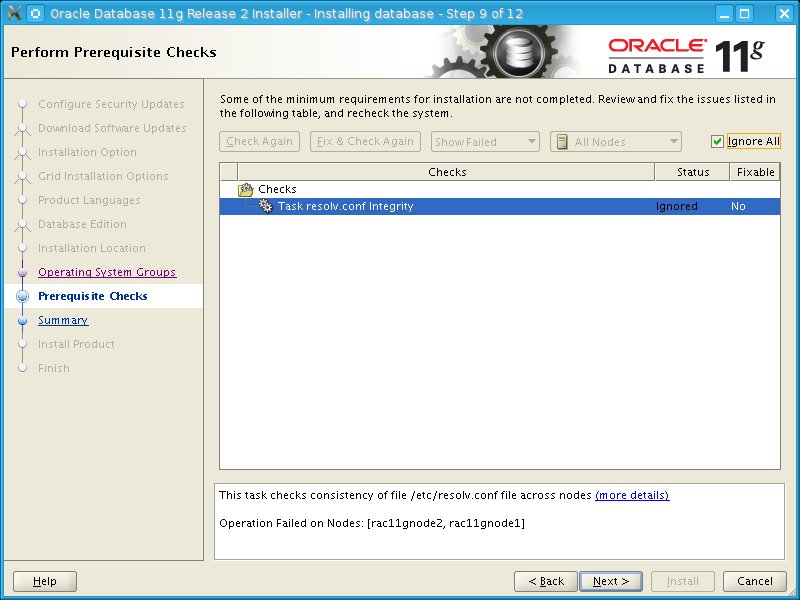

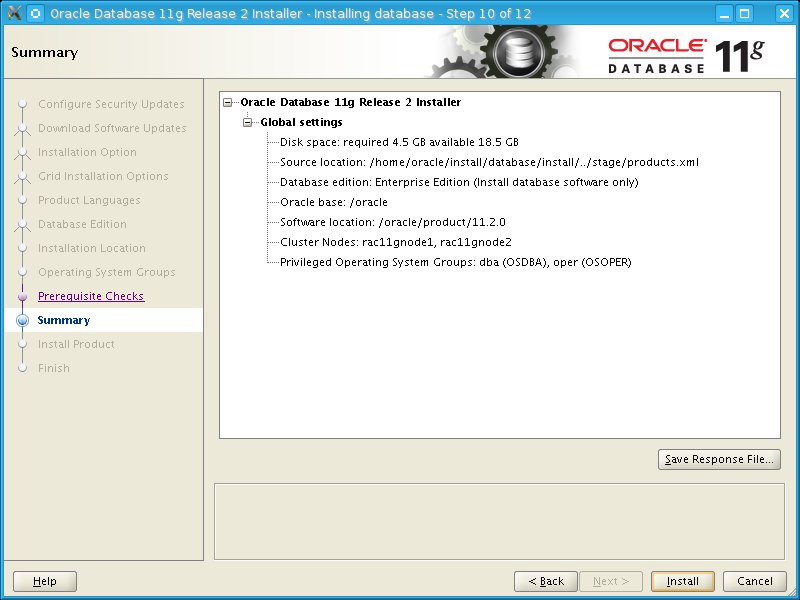

Now we can proceed with installation of the oracle software.

Unzip installation files and start the installation:

Now we can apply the latest PSU patch:

Copy the p6880880 patch to the $ORACLE_HOME directory and unzip it.

[oracle@rac11gnode1 14727310]$ opatch prereq CheckConflictAgainstOHWithDetail -ph ./

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

PREREQ session

Oracle Home : /oracle/product/11.2.0

Central Inventory : /grid/oraInventory

from : /oracle/product/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-01-33PM_1.log

Invoking prereq "checkconflictagainstohwithdetail"

Prereq "checkConflictAgainstOHWithDetail" passed.

OPatch succeeded.

Apply the patch:

[oracle@rac11gnode1 14727310]$ opatch apply

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

Oracle Home : /oracle/product/11.2.0

Central Inventory : /grid/oraInventory

from : /oracle/product/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-02-31PM_1.log

Verifying environment and performing prerequisite checks...

OPatch continues with these patches: 13343438 13696216 13923374 14275605 14727310

Do you want to proceed? [y|n]

y

User Responded with: Y

All checks passed.

Provide your email address to be informed of security issues, install and

initiate Oracle Configuration Manager. Easier for you if you use your My

Oracle Support Email address/User Name.

Visit http://www.oracle.com/support/policies.html for details.

Email address/User Name:

You have not provided an email address for notification of security issues.

Do you wish to remain uninformed of security issues ([Y]es, [N]o) [N]: Y

This node is part of an Oracle Real Application Cluster.

Remote nodes: 'rac11gnode2'

Local node: 'rac11gnode1'

Please shutdown Oracle instances running out of this ORACLE_HOME on the local system.

(Oracle Home = '/oracle/product/11.2.0')

Is the local system ready for patching? [y|n]

y

User Responded with: Y

Backing up files...

Applying sub-patch '13343438' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '13696216' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.sdo.locator, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Patching component oracle.sysman.oms.core, 10.2.0.4.4...

Verifying the update...

Applying sub-patch '13923374' to OH '/oracle/product/11.2.0'

ApplySession: Optional component(s) [ oracle.network.cman, 11.2.0.3.0 ] not present in the Oracle Home or a higher version is found.

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.network.rsf, 11.2.0.3.0...

Patching component oracle.network.listener, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '14275605' to OH '/oracle/product/11.2.0'

ApplySession: Optional component(s) [ oracle.precomp.lang, 11.2.0.3.0 ] not present in the Oracle Home or a higher version is found.

Patching component oracle.network.client, 11.2.0.3.0...

Patching component oracle.network.rsf, 11.2.0.3.0...

Patching component oracle.precomp.common, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.rdbms.rman, 11.2.0.3.0...

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms.util, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '14727310' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.rdbms.deconfig, 11.2.0.3.0...

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.sdo.locator, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Patching component oracle.sysman.oms.core, 10.2.0.4.4...

Verifying the update...

OPatch found the word "warning" in the stderr of the make command.

Please look at this stderr. You can re-run this make command.

Stderr output:

ins_precomp.mk:19: warning: overriding commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/env_precomp.mk:2160: warning: ignoring old commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/ins_precomp.mk:19: warning: overriding commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/env_precomp.mk:2160: warning: ignoring old commands for target `pcscfg.cfg'

Patching in rolling mode.

The node 'rac11gnode2' will be patched next.

Please shutdown Oracle instances running out of this ORACLE_HOME on 'rac11gnode2'.

(Oracle Home = '/oracle/product/11.2.0')

Is the node ready for patching? [y|n]

y

User Responded with: Y

Updating nodes 'rac11gnode2'

Apply-related files are:

FP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt"

DP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt"

MP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt"

RC = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/remote_cmds.txt"

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt" with actual path.

Propagating files to remote nodes...

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt" with actual path.

Propagating directories to remote nodes...

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt" with actual path.

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/network/lib; /usr/bin/make -f ins_net_client.mk client_sharedlib ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk client_sharedlib ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk ioracle ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk irenamedg ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk inid ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/network/lib; /usr/bin/make -f ins_net_server.mk itnslsnr ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/precomp/lib; /usr/bin/make -f ins_precomp.mk proc ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/precomp/lib; /usr/bin/make -f ins_precomp.mk procob ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/plsql/lib; /usr/bin/make -f ins_plsql.mk iwrap ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk irman ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk iplshprof ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk iamdu ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk ikfed ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

RC file not exist. There are no commands to be run on the remote nodes.

The node 'rac11gnode2' has been patched. You can restart Oracle instances on it.

Composite patch 14727310 successfully applied.

OPatch Session completed with warnings.

Log file location: /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-02-31PM_1.log

OPatch completed with warnings.

Now we can proceed and create the database.

the first thing we will do is to create a new diskgroup called DATA where the datafiles will resid:

[grid@rac11gnode1 ~]$ asmcmd

ASMCMD> ls -l

State Type Rebal Name

MOUNTED EXTERN N ACFS1/

Existing diskgroups:

SQL> select name,total_mb from v$asm_diskgroup;

NAME TOTAL_MB

------------------------------ ----------

ACFS1 1024

Available disks:

SQL> select path,mount_status,os_mb from v$asm_disk;

PATH MOUNT_S OS_MB

-------------------------------------------------- ------- ----------

/dev/oracleasm/disks/ASM8 CLOSED 7424

/dev/oracleasm/disks/ASM7 CLOSED 10240

/dev/oracleasm/disks/ASM6 CLOSED 5152

/dev/oracleasm/disks/ASM5 CLOSED 5152

/dev/oracleasm/disks/ASM4 CLOSED 5152

/dev/oracleasm/disks/ASM3 CLOSED 5152

/dev/oracleasm/disks/ASM2 CLOSED 5152

/dev/oracleasm/disks/ASM1 CLOSED 5152

/dev/oracleasm/disks/ACFS2 CLOSED 1024

/dev/oracleasm/disks/ACFS1 CACHED 1024

Now, we can create the DATA diskgroup:

SQL> create diskgroup DATA external redundancy disk '/dev/oracleasm/disks/ASM1','/dev/oracleasm/disks/ASM2' attribute 'COMPATIBLE.ASM'='11.2.0.0','COMPATIBLE.RDBMS'='11.2.0.0';

Diskgroup created.

We can see, that the diskgroup is mounted:

[grid@rac11gnode1 ~]$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.ACFS1.dg ora....up.type ONLINE ONLINE rac11gnode1

ora.DATA.dg ora....up.type ONLINE ONLINE rac11gnode1

We can prepare parameter file and create the database:

Create initSID.ora file:

db_block_size=8192

cluster_database_instances=2

remote_listener=LISTENERS_RACDB

open_cursors=500

db_domain=example.com

db_name=RACDB

db_create_file_dest=+DATA

compatible=11.2.0.3

diagnostic_dest=/oracle

processes=400

sga_max_size=800M

sga_target=800M

autit_file_dest=/oracle/admin/adump

audit_trail=db

remote_login_passwordfile=exclusive

pga_aggregate_target=500M

RACDB1.instance_number=1

RACDB2.instance_number=2

RACDB1.thread=1

RACDB2.thread=2

RACDB1.undo_tablespace=undotbs1

RACDB2.undo_tablespace=undotbs2

undo_management=auto

Create password file:

[oracle@rac11gnode1 dbs]$ orapwd file=$ORACLE_HOME/dbs/orapw$ORACLE_SID

Enter password for SYS:

Add following to tnsnames.ora:

LISTENERS_RACDB =

(ADDRESS_LIST =

(ADDRESS = (PROTOCOL = tcp)(HOST = rac11gnode1-vip)(PORT=1521))

(ADDRESS = (PROTOCOL = tcp)(HOST= rac11gnode2-vip)(PORT=1521))

)

Create directory on the ASM disk:

[grid@rac11gnode1 ~]$ asmcmd

ASMCMD> ls

ACFS1/

DATA/

ASMCMD> cd DATA

ASMCMD> ls

ASMCMD> mkdir RACBD

Startup the instance:

[oracle@rac11gnode1 ~]$ sqlplus "/as sysdba"

SQL*Plus: Release 11.2.0.3.0 Production on Sun Apr 14 14:39:31 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup nomount;

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Create the database:

SQL> ed

Wrote file afiedt.buf

1 create database RACDB

2 user sys identified by itsids41

3 user system identified by itsids41

4 maxinstances 5

5 maxlogfiles 10

6 maxlogmembers 5

7 maxdatafiles 100

8 character set al32utf8

9 national character set al16utf16

10 datafile size 500M extent management local

11 sysaux datafile size 500M

12 smallfile default temporary tablespace tamp tempfile size 100M uniform size 1M

13 smallfile undo tablespace undotbs1 datafile size 100M

14 logfile group 1 size 100M,

15* group 2 size 100M

SQL> /

Database created.

Insert control_files parameter to init file:

SQL> set linesize 2048

SQL> column ctl_files NEW_VALUE ctl_files

SQL> select concat('control_files=''', concat(replace(value, ', ', ''','''), '''')) ctl_files from v$parameter where name ='control_files'; SQL> host echo &ctl_files >> $ORACLE_HOME/dbs/initRACDB.ora;

Create the undo tablespace for the second instance:

SQL> create smallfile undo tablespace undotbs2 datafile size 100M;

Tablespace created.

Run following scripts as the sys user:

@?/rdbms/admin/catalog.sql

@?/rdbms/admin/catblock.sql

@?/rdbms/admin/catproc.sql

Next start following scripts as system user:

@?/sqlplus/admin/pupbld.sql

@?/sqlplus/admin/help/hlpbld.sql helpus.sql

Now we will create the FRA diskgroup:

SQL> create diskgroup FRA external redundancy disk '/dev/oracleasm/disks/ASM8' attribute 'COMPATIBLE.ASM'='11.2.0.0.0','COMPATIBLE.RDBMS'='11.2.0.0.0';

Diskgroup created.

Add the logfile group for the second instance:

SQL> alter system set db_create_online_log_dest_1='+DATA';

System altered.

SQL> alter system set db_create_online_log_dest_2='+FRA';

System altered.

SQL> alter database add logfile thread 2 group 3 size 100M, group 4 size 100M;

Database altered.

SQL> alter database enable public thread 2;

Database altered.

Create spfile:

SQL> create spfile='+DATA/RACDB/spfileRACDB.ora' from pfile;

File created.

Create initSID.ora file swith following entry:

SPFILE='+DATA/DARCB/spfileRACDB.ora' and copy it to the second host.

Now mount the diskgroups on second node in the +ASM2:

SQL> alter diskgroup data mount;

Diskgroup altered.

SQL> alter diskgroup fra mount;

Diskgroup altered.

Restart the database on second node and set following parameter in the spfile:

SQL> alter system set cluster_database=true scope=spfile sid='*';

System altered.

SQL> shu immediate;

Database closed.

Database dismounted.

ORACLE instance shut down.

SQL> startup

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Database mounted.

Database opened.

Startup the instance again.

Now, you can startup the database on second node:

SQL> startup

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 293604736 bytes

Database Buffers 536870912 bytes

Redo Buffers 2396160 bytes

Database mounted.

Database opened.

Check if everything is OK:

SQL> select instance_name from gv$instance;

INSTANCE_NAME

----------------

RACDB1

RACDB2

Now we will register the databases and listeners to CRS:

Be sure, that all instances are down.

Add instances to CRS:

[oracle@rac11gnode1 admin]$ srvctl add database -d RACDB -o $ORACLE_HOME -m example.com

[oracle@rac11gnode1 admin]$ srvctl add instance -d RACDB -i RACDB1 -n rac11gnode1

[oracle@rac11gnode1 admin]$ srvctl add instance -d RACDB -i RACDB2 -n rac11gnode2

You can see the database resource in the crs_stat:

ora....de2.vip ora....t1.type ONLINE ONLINE rac11gnode2

ora.racdb.db ora....se.type ONLINE ONLINE rac11gnode1

ora.scan1.vip ora....ip.type ONLINE ONLINE rac11gnode2

ora.scan2.vip ora....ip.type ONLINE ONLINE rac11gnode1

ora.scan3.vip ora....ip.type ONLINE ONLINE rac11gnode1

Check the status:

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is running on node rac11gnode1

Instance RACDB2 is running on node rac11gnode2

Now I will add one logfile member to group 1 and 2, because they have only one memner:

SQL> alter database add logfile member '+FRA' to '+DATA/racdb/onlinelog/group_1.257.812731745';

Database altered.

SQL> alter database add logfile member '+FRA' to '+DATA/racdb/onlinelog/group_2.258.812731749';

Database altered.

SQL> select group#,member from v$logfile order by 1;

GROUP# MEMBER

---------- ------------------------------------------------------------

1 +FRA/racdb/onlinelog/group_1.258.812750999

1 +DATA/racdb/onlinelog/group_1.257.812731745

2 +DATA/racdb/onlinelog/group_2.258.812731749

2 +FRA/racdb/onlinelog/group_2.259.812751065

3 +DATA/racdb/onlinelog/group_3.264.812744117

3 +FRA/racdb/onlinelog/group_3.256.812744121

4 +DATA/racdb/onlinelog/group_4.265.812744123

4 +FRA/racdb/onlinelog/group_4.257.812744127

Now, we will switch database to archivelog mode:

I will add one disk to FRA diskgroup:

SQL> alter diskgroup FRA add disk '/dev/oracleasm/disks/ASM7';

Diskgroup altered.

SQL> select INST_ID, OPERATION, STATE, POWER, SOFAR, EST_WORK, EST_RATE, EST_MINUTES from GV$ASM_OPERATION where GROUP_NUMBER=1;

no rows selected

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS1 1024 628

DATA 10304 8465

FRA 17664 17152

Now set the archiving parameters:

SQL> alter system set cluster_database=false scope=spfile sid='*';

System altered.

SQL> alter system set db_recovery_file_dest='+FRA' scope=spfile sid='*';

System altered.

SQL> alter system set db_recovery_file_dest_size=15G scope=spfile sid='*';

System altered.

Stop database:

[oracle@rac11gnode1 admin]$ srvctl stop db -d RACDB

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is not running on node rac11gnode1

Instance RACDB2 is not running on node rac11gnode2

Startup database locally, change archivelog mode :

[oracle@rac11gnode1 admin]$ sqlplus "/as sysdba"

SQL*Plus: Release 11.2.0.3.0 Production on Sun Apr 14 20:53:08 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup mount;

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Database mounted.

SQL> alter database archivelog;

Database altered.

Enable cluster_database parameter and restart database:

SQL> alter system set cluster_database=true scope=spfile sid='*';

System altered.

SQL> shu immediate;

ORA-01109: database not open

Database dismounted.

ORACLE instance shut down.

[oracle@rac11gnode1 admin]$ srvctl start db -d RACDB

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is running on node rac11gnode1

Instance RACDB2 is running on node rac11gnode2

Copy the p6880880 patch to the $ORACLE_HOME directory and unzip it.

[oracle@rac11gnode1 14727310]$ opatch prereq CheckConflictAgainstOHWithDetail -ph ./

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

PREREQ session

Oracle Home : /oracle/product/11.2.0

Central Inventory : /grid/oraInventory

from : /oracle/product/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-01-33PM_1.log

Invoking prereq "checkconflictagainstohwithdetail"

Prereq "checkConflictAgainstOHWithDetail" passed.

OPatch succeeded.

Apply the patch:

[oracle@rac11gnode1 14727310]$ opatch apply

Oracle Interim Patch Installer version 11.2.0.3.3

Copyright (c) 2012, Oracle Corporation. All rights reserved.

Oracle Home : /oracle/product/11.2.0

Central Inventory : /grid/oraInventory

from : /oracle/product/11.2.0/oraInst.loc

OPatch version : 11.2.0.3.3

OUI version : 11.2.0.3.0

Log file location : /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-02-31PM_1.log

Verifying environment and performing prerequisite checks...

OPatch continues with these patches: 13343438 13696216 13923374 14275605 14727310

Do you want to proceed? [y|n]

y

User Responded with: Y

All checks passed.

Provide your email address to be informed of security issues, install and

initiate Oracle Configuration Manager. Easier for you if you use your My

Oracle Support Email address/User Name.

Visit http://www.oracle.com/support/policies.html for details.

Email address/User Name:

You have not provided an email address for notification of security issues.

Do you wish to remain uninformed of security issues ([Y]es, [N]o) [N]: Y

This node is part of an Oracle Real Application Cluster.

Remote nodes: 'rac11gnode2'

Local node: 'rac11gnode1'

Please shutdown Oracle instances running out of this ORACLE_HOME on the local system.

(Oracle Home = '/oracle/product/11.2.0')

Is the local system ready for patching? [y|n]

y

User Responded with: Y

Backing up files...

Applying sub-patch '13343438' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '13696216' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.sdo.locator, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Patching component oracle.sysman.oms.core, 10.2.0.4.4...

Verifying the update...

Applying sub-patch '13923374' to OH '/oracle/product/11.2.0'

ApplySession: Optional component(s) [ oracle.network.cman, 11.2.0.3.0 ] not present in the Oracle Home or a higher version is found.

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.network.rsf, 11.2.0.3.0...

Patching component oracle.network.listener, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '14275605' to OH '/oracle/product/11.2.0'

ApplySession: Optional component(s) [ oracle.precomp.lang, 11.2.0.3.0 ] not present in the Oracle Home or a higher version is found.

Patching component oracle.network.client, 11.2.0.3.0...

Patching component oracle.network.rsf, 11.2.0.3.0...

Patching component oracle.precomp.common, 11.2.0.3.0...

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.rdbms.rman, 11.2.0.3.0...

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.rdbms.util, 11.2.0.3.0...

Verifying the update...

Applying sub-patch '14727310' to OH '/oracle/product/11.2.0'

Patching component oracle.rdbms, 11.2.0.3.0...

Patching component oracle.rdbms.dbscripts, 11.2.0.3.0...

Patching component oracle.rdbms.deconfig, 11.2.0.3.0...

Patching component oracle.rdbms.rsf, 11.2.0.3.0...

Patching component oracle.sdo.locator, 11.2.0.3.0...

Patching component oracle.sysman.console.db, 11.2.0.3.0...

Patching component oracle.sysman.oms.core, 10.2.0.4.4...

Verifying the update...

OPatch found the word "warning" in the stderr of the make command.

Please look at this stderr. You can re-run this make command.

Stderr output:

ins_precomp.mk:19: warning: overriding commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/env_precomp.mk:2160: warning: ignoring old commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/ins_precomp.mk:19: warning: overriding commands for target `pcscfg.cfg'

/oracle/product/11.2.0/precomp/lib/env_precomp.mk:2160: warning: ignoring old commands for target `pcscfg.cfg'

Patching in rolling mode.

The node 'rac11gnode2' will be patched next.

Please shutdown Oracle instances running out of this ORACLE_HOME on 'rac11gnode2'.

(Oracle Home = '/oracle/product/11.2.0')

Is the node ready for patching? [y|n]

y

User Responded with: Y

Updating nodes 'rac11gnode2'

Apply-related files are:

FP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt"

DP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt"

MP = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt"

RC = "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/remote_cmds.txt"

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_files.txt" with actual path.

Propagating files to remote nodes...

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/copy_dirs.txt" with actual path.

Propagating directories to remote nodes...

Instantiating the file "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt.instantiated" by replacing $ORACLE_HOME in "/oracle/product/11.2.0/.patch_storage/NApply/2013-04-10_21-02-31PM/rac/make_cmds.txt" with actual path.

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/network/lib; /usr/bin/make -f ins_net_client.mk client_sharedlib ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk client_sharedlib ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk ioracle ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk irenamedg ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk inid ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/network/lib; /usr/bin/make -f ins_net_server.mk itnslsnr ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/precomp/lib; /usr/bin/make -f ins_precomp.mk proc ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/precomp/lib; /usr/bin/make -f ins_precomp.mk procob ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/plsql/lib; /usr/bin/make -f ins_plsql.mk iwrap ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk irman ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk iplshprof ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk iamdu ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

Running command on remote node 'rac11gnode2':

cd /oracle/product/11.2.0/rdbms/lib; /usr/bin/make -f ins_rdbms.mk ikfed ORACLE_HOME=/oracle/product/11.2.0 || echo REMOTE_MAKE_FAILED::>&2

RC file not exist. There are no commands to be run on the remote nodes.

The node 'rac11gnode2' has been patched. You can restart Oracle instances on it.

Composite patch 14727310 successfully applied.

OPatch Session completed with warnings.

Log file location: /oracle/product/11.2.0/cfgtoollogs/opatch/opatch2013-04-10_21-02-31PM_1.log

OPatch completed with warnings.

Now we can proceed and create the database.

the first thing we will do is to create a new diskgroup called DATA where the datafiles will resid:

[grid@rac11gnode1 ~]$ asmcmd

ASMCMD> ls -l

State Type Rebal Name

MOUNTED EXTERN N ACFS1/

Existing diskgroups:

SQL> select name,total_mb from v$asm_diskgroup;

NAME TOTAL_MB

------------------------------ ----------

ACFS1 1024

Available disks:

SQL> select path,mount_status,os_mb from v$asm_disk;

PATH MOUNT_S OS_MB

-------------------------------------------------- ------- ----------

/dev/oracleasm/disks/ASM8 CLOSED 7424

/dev/oracleasm/disks/ASM7 CLOSED 10240

/dev/oracleasm/disks/ASM6 CLOSED 5152

/dev/oracleasm/disks/ASM5 CLOSED 5152

/dev/oracleasm/disks/ASM4 CLOSED 5152

/dev/oracleasm/disks/ASM3 CLOSED 5152

/dev/oracleasm/disks/ASM2 CLOSED 5152

/dev/oracleasm/disks/ASM1 CLOSED 5152

/dev/oracleasm/disks/ACFS2 CLOSED 1024

/dev/oracleasm/disks/ACFS1 CACHED 1024

Now, we can create the DATA diskgroup:

SQL> create diskgroup DATA external redundancy disk '/dev/oracleasm/disks/ASM1','/dev/oracleasm/disks/ASM2' attribute 'COMPATIBLE.ASM'='11.2.0.0','COMPATIBLE.RDBMS'='11.2.0.0';

Diskgroup created.

We can see, that the diskgroup is mounted:

[grid@rac11gnode1 ~]$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.ACFS1.dg ora....up.type ONLINE ONLINE rac11gnode1

ora.DATA.dg ora....up.type ONLINE ONLINE rac11gnode1

We can prepare parameter file and create the database:

Create initSID.ora file:

db_block_size=8192

cluster_database_instances=2

remote_listener=LISTENERS_RACDB

open_cursors=500

db_domain=example.com

db_name=RACDB

db_create_file_dest=+DATA

compatible=11.2.0.3

diagnostic_dest=/oracle

processes=400

sga_max_size=800M

sga_target=800M

autit_file_dest=/oracle/admin/adump

audit_trail=db

remote_login_passwordfile=exclusive

pga_aggregate_target=500M

RACDB1.instance_number=1

RACDB2.instance_number=2

RACDB1.thread=1

RACDB2.thread=2

RACDB1.undo_tablespace=undotbs1

RACDB2.undo_tablespace=undotbs2

undo_management=auto

Create password file:

[oracle@rac11gnode1 dbs]$ orapwd file=$ORACLE_HOME/dbs/orapw$ORACLE_SID

Enter password for SYS:

Add following to tnsnames.ora:

LISTENERS_RACDB =

(ADDRESS_LIST =

(ADDRESS = (PROTOCOL = tcp)(HOST = rac11gnode1-vip)(PORT=1521))

(ADDRESS = (PROTOCOL = tcp)(HOST= rac11gnode2-vip)(PORT=1521))

)

Create directory on the ASM disk:

[grid@rac11gnode1 ~]$ asmcmd

ASMCMD> ls

ACFS1/

DATA/

ASMCMD> cd DATA

ASMCMD> ls

ASMCMD> mkdir RACBD

Startup the instance:

[oracle@rac11gnode1 ~]$ sqlplus "/as sysdba"

SQL*Plus: Release 11.2.0.3.0 Production on Sun Apr 14 14:39:31 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup nomount;

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Create the database:

SQL> ed

Wrote file afiedt.buf

1 create database RACDB

2 user sys identified by itsids41

3 user system identified by itsids41

4 maxinstances 5

5 maxlogfiles 10

6 maxlogmembers 5

7 maxdatafiles 100

8 character set al32utf8

9 national character set al16utf16

10 datafile size 500M extent management local

11 sysaux datafile size 500M

12 smallfile default temporary tablespace tamp tempfile size 100M uniform size 1M

13 smallfile undo tablespace undotbs1 datafile size 100M

14 logfile group 1 size 100M,

15* group 2 size 100M

SQL> /

Database created.

Insert control_files parameter to init file:

SQL> set linesize 2048

SQL> column ctl_files NEW_VALUE ctl_files

SQL> select concat('control_files=''', concat(replace(value, ', ', ''','''), '''')) ctl_files from v$parameter where name ='control_files'; SQL> host echo &ctl_files >> $ORACLE_HOME/dbs/initRACDB.ora;

Create the undo tablespace for the second instance:

SQL> create smallfile undo tablespace undotbs2 datafile size 100M;

Tablespace created.

Run following scripts as the sys user:

@?/rdbms/admin/catalog.sql

@?/rdbms/admin/catblock.sql

@?/rdbms/admin/catproc.sql

Next start following scripts as system user:

@?/sqlplus/admin/pupbld.sql

@?/sqlplus/admin/help/hlpbld.sql helpus.sql

Now we will create the FRA diskgroup:

SQL> create diskgroup FRA external redundancy disk '/dev/oracleasm/disks/ASM8' attribute 'COMPATIBLE.ASM'='11.2.0.0.0','COMPATIBLE.RDBMS'='11.2.0.0.0';

Diskgroup created.

Add the logfile group for the second instance:

SQL> alter system set db_create_online_log_dest_1='+DATA';

System altered.

SQL> alter system set db_create_online_log_dest_2='+FRA';

System altered.

SQL> alter database add logfile thread 2 group 3 size 100M, group 4 size 100M;

Database altered.

SQL> alter database enable public thread 2;

Database altered.

Create spfile:

SQL> create spfile='+DATA/RACDB/spfileRACDB.ora' from pfile;

File created.

Create initSID.ora file swith following entry:

SPFILE='+DATA/DARCB/spfileRACDB.ora' and copy it to the second host.

Now mount the diskgroups on second node in the +ASM2:

SQL> alter diskgroup data mount;

Diskgroup altered.

SQL> alter diskgroup fra mount;

Diskgroup altered.

Restart the database on second node and set following parameter in the spfile:

SQL> alter system set cluster_database=true scope=spfile sid='*';

System altered.

SQL> shu immediate;

Database closed.

Database dismounted.

ORACLE instance shut down.

SQL> startup

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Database mounted.

Database opened.

Startup the instance again.

Now, you can startup the database on second node:

SQL> startup

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 293604736 bytes

Database Buffers 536870912 bytes

Redo Buffers 2396160 bytes

Database mounted.

Database opened.

Check if everything is OK:

SQL> select instance_name from gv$instance;

INSTANCE_NAME

----------------

RACDB1

RACDB2

Now we will register the databases and listeners to CRS:

Be sure, that all instances are down.

Add instances to CRS:

[oracle@rac11gnode1 admin]$ srvctl add database -d RACDB -o $ORACLE_HOME -m example.com

[oracle@rac11gnode1 admin]$ srvctl add instance -d RACDB -i RACDB1 -n rac11gnode1

[oracle@rac11gnode1 admin]$ srvctl add instance -d RACDB -i RACDB2 -n rac11gnode2

You can see the database resource in the crs_stat:

ora....de2.vip ora....t1.type ONLINE ONLINE rac11gnode2

ora.racdb.db ora....se.type ONLINE ONLINE rac11gnode1

ora.scan1.vip ora....ip.type ONLINE ONLINE rac11gnode2

ora.scan2.vip ora....ip.type ONLINE ONLINE rac11gnode1

ora.scan3.vip ora....ip.type ONLINE ONLINE rac11gnode1

Check the status:

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is running on node rac11gnode1

Instance RACDB2 is running on node rac11gnode2

Now I will add one logfile member to group 1 and 2, because they have only one memner:

SQL> alter database add logfile member '+FRA' to '+DATA/racdb/onlinelog/group_1.257.812731745';

Database altered.

SQL> alter database add logfile member '+FRA' to '+DATA/racdb/onlinelog/group_2.258.812731749';

Database altered.

SQL> select group#,member from v$logfile order by 1;

GROUP# MEMBER

---------- ------------------------------------------------------------

1 +FRA/racdb/onlinelog/group_1.258.812750999

1 +DATA/racdb/onlinelog/group_1.257.812731745

2 +DATA/racdb/onlinelog/group_2.258.812731749

2 +FRA/racdb/onlinelog/group_2.259.812751065

3 +DATA/racdb/onlinelog/group_3.264.812744117

3 +FRA/racdb/onlinelog/group_3.256.812744121

4 +DATA/racdb/onlinelog/group_4.265.812744123

4 +FRA/racdb/onlinelog/group_4.257.812744127

Now, we will switch database to archivelog mode:

I will add one disk to FRA diskgroup:

SQL> alter diskgroup FRA add disk '/dev/oracleasm/disks/ASM7';

Diskgroup altered.

SQL> select INST_ID, OPERATION, STATE, POWER, SOFAR, EST_WORK, EST_RATE, EST_MINUTES from GV$ASM_OPERATION where GROUP_NUMBER=1;

no rows selected

SQL> select name,total_mb,free_mb from v$asm_diskgroup;

NAME TOTAL_MB FREE_MB

------------------------------ ---------- ----------

ACFS1 1024 628

DATA 10304 8465

FRA 17664 17152

Now set the archiving parameters:

SQL> alter system set cluster_database=false scope=spfile sid='*';

System altered.

SQL> alter system set db_recovery_file_dest='+FRA' scope=spfile sid='*';

System altered.

SQL> alter system set db_recovery_file_dest_size=15G scope=spfile sid='*';

System altered.

Stop database:

[oracle@rac11gnode1 admin]$ srvctl stop db -d RACDB

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is not running on node rac11gnode1

Instance RACDB2 is not running on node rac11gnode2

Startup database locally, change archivelog mode :

[oracle@rac11gnode1 admin]$ sqlplus "/as sysdba"

SQL*Plus: Release 11.2.0.3.0 Production on Sun Apr 14 20:53:08 2013

Copyright (c) 1982, 2011, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup mount;

ORACLE instance started.

Total System Global Area 835104768 bytes

Fixed Size 2232960 bytes

Variable Size 230690176 bytes

Database Buffers 599785472 bytes

Redo Buffers 2396160 bytes

Database mounted.

SQL> alter database archivelog;

Database altered.

Enable cluster_database parameter and restart database:

SQL> alter system set cluster_database=true scope=spfile sid='*';

System altered.

SQL> shu immediate;

ORA-01109: database not open

Database dismounted.

ORACLE instance shut down.

[oracle@rac11gnode1 admin]$ srvctl start db -d RACDB

[oracle@rac11gnode1 admin]$ srvctl status db -d RACDB

Instance RACDB1 is running on node rac11gnode1

Instance RACDB2 is running on node rac11gnode2